Contents Audio Samplesġ.1 Adaptation voice on VCTK, LJSpeech and LibriTTSĢ.2 Utterance-level Visualization AnalysisĢ.3 Finetune CLN and Finetune Other Decoder ParametersĪdaptation voice on LibriTTS, VCTK and LJSpeech Experiment results show that AdaSpeech achieves much better adaptation quality than baseline methods, with only about 5K specific parameters for each speaker, which demonstrates its effectiveness for custom voice. We pre-train the source TTS model on LibriTTS datasets and fine-tune it on VCTK and LJSpeech datasets (with different acoustic conditions from LibriTTS) with few adaptation data, e.g., 20 sentences, about 1 minute speech. 2) To better trade off the adaptation parameters and voice quality, we introduce conditional layer normalization in the mel-spectrogram decoder of AdaSpeech, and fine-tune this part in addition to speaker embedding for adaptation. Specifically, we use one acoustic encoder to extract an utterance-level vector and another one to extract a sequence of phoneme-level vectors from the target speech during pre-training and fine-tuning in inference, we extract the utterance-level vector from a reference speech and use an acoustic predictor to predict the phoneme-level vectors. We design several techniques in AdaSpeech to address the two challenges in custom voice: 1) To handle different acoustic conditions, we model the acoustic information in both utterance and phoneme level. In this work, we propose AdaSpeech, an adaptive TTS system for high-quality and efficient customization of new voices. Custom voice presents two unique challenges for TTS adaptation: 1) to support diverse customers, the adaptation model needs to handle diverse acoustic conditions which could be very different from source speech data, and 2) to support a large number of customers, the adaptation parameters need to be small enough for each target speaker to reduce memory usage while maintaining high voice quality. 
AbstractĬustom voice, a specific text to speech (TTS) service in commercial speech platforms, aims to adapt a source TTS model to synthesize personal voice for a target speaker using few speech from her/him. Mingjian Chen* (Microsoft Azure Speech) Xu Tan^* (Microsoft Research Asia) Bohan Li (Microsoft Azure Speech) Yanqing Liu (Microsoft Azure Speech) Tao Qin (Microsoft Research Asia) Sheng Zhao (Microsoft Azure Speech) Tie-Yan Liu (Microsoft Research Asia) Equal contribution.The quality of a voice trained on a very small data set won't be quite up to par with our custom voices, but it can be a great way to produce a proof of concept or power a hobby project.AdaSpeech: Adaptive Text to Speech for Custom Voice Our TTS is currently limited to English, but we can produce custom voices for your brand, and we offer an affordable subscription tier that lets you train your own TTS voice with as little as 5 minutes of data. Our system works faster than real-time, so there's no waiting for your audio to be ready - by the time you can send a request to your streaming URL, the first chunks of audio should be ready, and playback won't get ahead of synthesis. Our mobile libraries have convenience methods for automatically streaming the audio to your local or web device. You send us either plain text or text formatted with SSML or Speech Markdown if you need fine control over the result, and we'll send you a URL where you can stream your result for the next 60 seconds.

Spokestack's current approach to TTS is cloud-based.

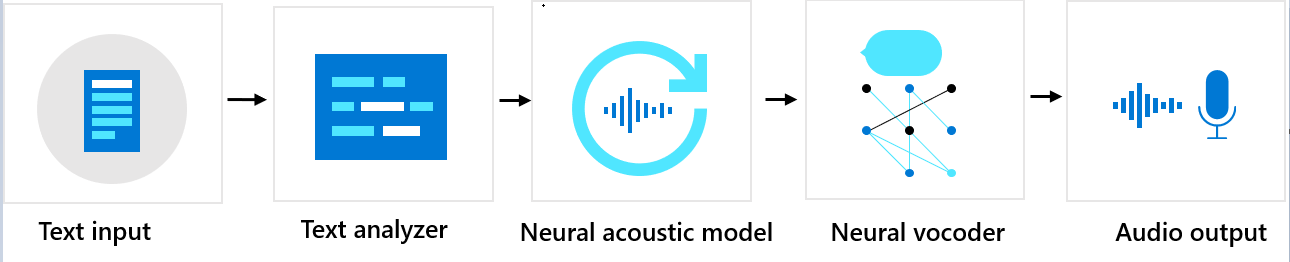

Natural speech synthesis is still a computationally intensive task the models that approach human performance require too many resources to run on a mobile device, but the field is advancing rapidly. We've come a long way since then, and today neural networks can produce speech nearly indistinguishable from a human speaker in both reproduction of individual letters and the qualities that make speech sound natural - things like cadence, intonation, and stress - collectively known as prosody. Synthesizing speech might be the oldest field in voice technology, with early efforts potentially dating back to the Middle Ages. Personalized Speech Specific to Each Potential User How Does Text-to-Speech Work? TTS transforms text input into audio that mimics a human speaker reading it aloud.
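The AdaSpeech abstract mentions conditional layer normalization in the mel-spectrogram decoder as the small, speaker-specific part that gets fine-tuned. As a rough illustration of that idea (not the authors' implementation), the sketch below normalizes a hidden vector and then applies a scale and bias that are linear projections of a speaker embedding. The function names, the toy dimensions, and the weight values are all assumptions made for this example; a real model would use a tensor library and learned weights.

```python
import math
from typing import List

Vector = List[float]
Matrix = List[Vector]


def linear(weights: Matrix, x: Vector) -> Vector:
    """Multiply a (rows x cols) weight matrix by a vector of length cols."""
    return [sum(w * v for w, v in zip(row, x)) for row in weights]


def conditional_layer_norm(hidden: Vector, speaker_embedding: Vector,
                           w_scale: Matrix, w_bias: Matrix,
                           eps: float = 1e-5) -> Vector:
    """Layer-normalize `hidden`, then apply a speaker-dependent scale and bias.

    Hypothetical sketch: scale and bias are plain linear projections of the
    speaker embedding, so only the projections (or the resulting vectors)
    are specific to a speaker.
    """
    mean = sum(hidden) / len(hidden)
    var = sum((h - mean) ** 2 for h in hidden) / len(hidden)
    normalized = [(h - mean) / math.sqrt(var + eps) for h in hidden]
    scale = linear(w_scale, speaker_embedding)  # one scale value per hidden unit
    bias = linear(w_bias, speaker_embedding)    # one bias value per hidden unit
    return [g * n + b for g, n, b in zip(scale, normalized, bias)]


# Toy setup (illustrative values only): hidden size 4, speaker embedding size 2.
hidden = [1.0, 2.0, 3.0, 4.0]
speaker_a = [1.0, 0.0]
speaker_b = [0.0, 1.0]
w_scale = [[1.0, 2.0] for _ in range(4)]  # speaker A -> scale 1, speaker B -> scale 2
w_bias = [[0.0, 0.5] for _ in range(4)]   # speaker A -> bias 0, speaker B -> bias 0.5

out_a = conditional_layer_norm(hidden, speaker_a, w_scale, w_bias)
out_b = conditional_layer_norm(hidden, speaker_b, w_scale, w_bias)
print(out_a)
print(out_b)
```

The same hidden vector yields different outputs for the two toy speakers, while the normalization itself is shared. Because the scale and bias depend only on the speaker embedding, they could be computed once per speaker and cached; the sketch recomputes them on every call only to keep the code short.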